Quantum computers are more fragile than most people realize. A single qubit — the quantum equivalent of a classical computer bit — doesn't just sit there holding its value. Its physical properties are constantly drifting. Its relaxation rate, which describes how quickly it loses its quantum state, can change dramatically within fractions of a second. A qubit that was performing reliably at the start of a computation may have silently degraded by the time it matters.

This instability has been a fundamental obstacle to scaling quantum computing. Now, researchers at the Niels Bohr Institute (NBI) at the University of Copenhagen, working with the Norwegian University of Science and Technology (NTNU), Leiden University, and Chalmers University of Technology, have developed a system that monitors these fluctuations in real time, roughly 100 times faster than previous techniques. The work was published in Physical Review X (DOI: 10.1103/gk1b-stl3).

The Problem: Qubits Change While You Watch Them

In classical computing, a bit is either 0 or 1, and it stays that way until you change it. In quantum computing, a qubit can be in a superposition of both states simultaneously — but maintaining that superposition is exquisitely difficult. Environmental noise, manufacturing imperfections, and even tiny stray electromagnetic fields can degrade a qubit's behavior.

One key parameter is T₁ (T-one), the relaxation time: how long a qubit can hold its quantum state before it decays. This parameter is not fixed. It fluctuates over time, sometimes quite dramatically. A qubit might have a T₁ of 100 microseconds at one moment and 20 microseconds a few milliseconds later.
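To put numbers on that swing, here is a minimal sketch assuming the simple textbook model in which a qubit prepared in its excited state is still excited after a wait t with probability exp(-t/T₁). The function name and the 50-microsecond wait are illustrative choices, not values from the study.

```python
import math

def survival_probability(wait_us: float, t1_us: float) -> float:
    """Probability that an excited qubit has not yet relaxed after wait_us,
    assuming simple exponential decay with relaxation time t1_us."""
    return math.exp(-wait_us / t1_us)

# Same 50-microsecond wait, evaluated for the two T1 values mentioned above.
for t1_us in (100.0, 20.0):
    p = survival_probability(50.0, t1_us)
    print(f"T1 = {t1_us:5.1f} us -> survival probability {p:.3f}")
```

Under this model the same wait leaves the qubit intact about 61% of the time at T₁ = 100 microseconds but only about 8% of the time at T₁ = 20 microseconds, which is why an unnoticed drop matters so much.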

"A good qubit can turn bad in fractions of a second," explains lead author Dr. Fabrizio Berritta, a postdoctoral researcher at NBI. "If your quantum processor doesn't know which qubits are currently behaving well, it can't route computations intelligently or decide which error correction resources to prioritize."

The Solution: An FPGA Running Bayesian Inference After Every Measurement

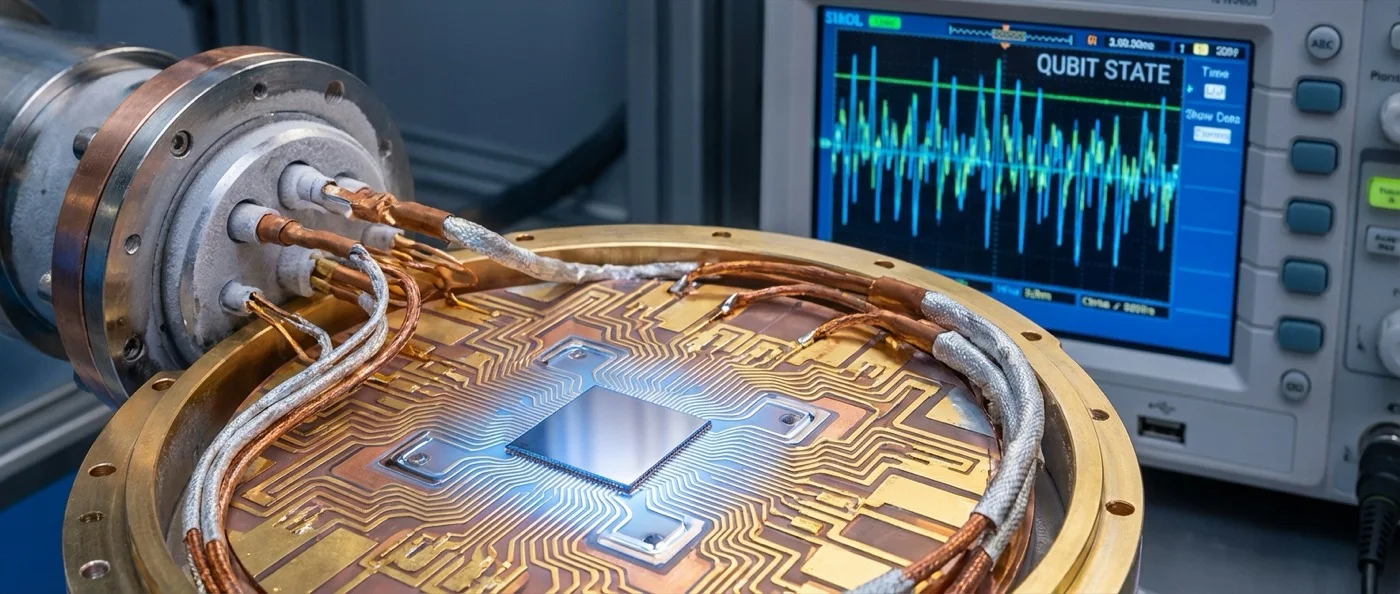

The NBI team's approach centers on replacing the conventional feedback loop, in which data travels from the quantum processor to a classical computer and back, with an FPGA (Field-Programmable Gate Array) that runs the entire inference algorithm itself, inside the control hardware that sits next to the quantum processor.

Specifically, they used an OPX1000 system from Quantum Machines, an FPGA-based quantum control system that can be programmed in a Python-like language with real-time pulse-level control. After each individual measurement of a qubit's state, the FPGA runs a Bayesian model update: it takes the prior estimate of the qubit's T₁, incorporates the new measurement result, and immediately outputs an updated posterior estimate. No data transfer to a remote computer. No waiting for software to process and respond.

"The FPGA updates the model after every single measurement," says group leader Prof. Morten Kjaergaard, Associate Professor at NBI's Center for Quantum Devices. "This allows us to track changes in qubit behavior at a timescale that simply wasn't accessible before."

~100× Faster Than Previous Methods

How much faster? The team demonstrates tracking of T₁ fluctuations on millisecond timescales — compared to the hundreds of milliseconds to seconds required by methods that rely on classical computer feedback loops. This is roughly a 100× improvement in temporal resolution.

In practical terms, this means a quantum processor using this system can maintain an up-to-date picture of which qubits are currently reliable, which have degraded, and how quickly each qubit's state is currently expected to decay. This information can be used to dynamically route computations to the best-performing qubits at any given moment.

The quantum processing unit (QPU) used in the experiments was designed and fabricated at Chalmers University of Technology in Sweden, part of the broader international collaboration.

What Bayesian Inference Brings to Quantum Hardware

Bayesian inference is a statistical method for updating beliefs based on evidence. In this context, it works like this: the system starts with a prior probability distribution over possible T₁ values for a qubit. After each measurement, it updates this distribution based on what the measurement showed, producing a posterior distribution that represents the current best estimate of the qubit's T₁.
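Written out, one such update might look as follows. This is a sketch under a simplified model, not the paper's exact estimator: it assumes ideal readout, a fixed wait time τ between preparing the excited state and measuring, and an outcome m that equals 1 if the qubit is still excited and 0 if it has relaxed.

$$
P(m \mid T_1) = \left(e^{-\tau/T_1}\right)^{m}\left(1 - e^{-\tau/T_1}\right)^{1-m},
\qquad
p_{\text{post}}(T_1) = \frac{P(m \mid T_1)\, p_{\text{prior}}(T_1)}{\int P(m \mid T_1')\, p_{\text{prior}}(T_1')\, dT_1'}
$$

The posterior from one measurement then serves as the prior for the next.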

By running this update after every single measurement — potentially thousands of times per second — the system builds an essentially continuous picture of how each qubit's T₁ is evolving. When a qubit's T₁ begins to drop (indicating that it is entering a “bad” state), the system knows immediately, rather than discovering it several seconds later after enough data has accumulated on a classical computer.
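The idea of updating after every shot and following a drifting T₁ can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the grid of candidate T₁ values, the 30-microsecond wait, the `forget` parameter that lets old evidence fade, and the hand-written outcome patterns standing in for real measurement data. It is not the algorithm the team runs on the FPGA.

```python
import math

# Hypothetical grid of candidate T1 values in microseconds: 5, 10, ..., 200.
T1_GRID_US = [5.0 * k for k in range(1, 41)]

def update(belief, outcome, wait_us, forget=0.02):
    """One Bayesian update of a discretized belief over T1.

    outcome is 1 if the qubit was still excited after wait_us, else 0.
    forget mixes in a little of the uniform distribution so that old
    evidence fades and the estimate can follow a drifting T1.
    """
    posterior = []
    for t1, prior in zip(T1_GRID_US, belief):
        p_excited = math.exp(-wait_us / t1)  # exponential-decay model
        likelihood = p_excited if outcome == 1 else 1.0 - p_excited
        posterior.append(prior * likelihood)
    total = sum(posterior)
    uniform = 1.0 / len(T1_GRID_US)
    return [(1.0 - forget) * p / total + forget * uniform for p in posterior]

def mean_t1(belief):
    """Posterior-mean estimate of T1 in microseconds."""
    return sum(t1 * p for t1, p in zip(T1_GRID_US, belief))

def run(belief, pattern, repeats, wait_us=30.0):
    """Feed a repeating pattern of measurement outcomes into the belief."""
    for _ in range(repeats):
        for outcome in pattern:
            belief = update(belief, outcome, wait_us)
    return belief

belief = [1.0 / len(T1_GRID_US)] * len(T1_GRID_US)  # flat prior
belief = run(belief, [1, 1, 1, 0], 50)     # 75% excited: consistent with T1 near 100 us
estimate_good = mean_t1(belief)
belief = run(belief, [1, 0, 0, 0, 0], 40)  # 20% excited: consistent with T1 near 20 us
estimate_bad = mean_t1(belief)
print(f"estimate while good: {estimate_good:.0f} us, after degrading: {estimate_bad:.0f} us")
```

The forgetting step is one simple design choice among several. Without it, a long run of "good" evidence would make the belief so confident that it reacts slowly when the qubit changes.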

This is not just faster data processing — it fundamentally changes the relationship between the classical control system and the quantum hardware. The control system is no longer a passive observer that occasionally checks in; it becomes an active, real-time partner that knows the quantum hardware's current state at every moment.

Limitations: The Unexplained Fluctuations

The team is careful to note that the system, while a major advance, does not solve the underlying problem of qubit instability — it only tracks it. The physical mechanisms causing the T₁ fluctuations remain incompletely understood. The research reveals that a large fraction of the observed fluctuations cannot yet be explained by existing models of qubit noise.

"We can now see the fluctuations much more clearly, which is actually making the physics harder to explain," Berritta noted. "There are dynamics here that our current theoretical models don't fully account for." This is, in one sense, a feature rather than a bug: better measurement tools reveal physics that was previously hidden.

The Novo Nordisk Foundation Quantum Computing Programme and NBI's Center for Quantum Devices funded the research.

Implications for Fault-Tolerant Quantum Computing

The road to fault-tolerant quantum computers — machines that can run long, complex computations without errors destroying the result — requires solving several interrelated problems: better qubits, better error correction codes, and better classical control systems that can respond to the quantum hardware in real time.

This work addresses the third category directly. By giving the control system a real-time picture of qubit behavior, it enables smarter error correction strategies, adaptive compilation (choosing circuit routes in real time based on which qubits are currently good), and potentially longer coherent computations on existing hardware.
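As a rough illustration of what adaptive qubit selection could look like once live T₁ estimates are available, the sketch below picks the qubits with the longest estimated T₁ above a cutoff. The function `pick_qubits`, the 50-microsecond threshold, and the snapshot values are all hypothetical. A real compiler would also have to weigh connectivity, gate fidelities, and other constraints.

```python
def pick_qubits(t1_estimates_us, needed, min_t1_us=50.0):
    """Return the `needed` qubit labels with the longest estimated T1,
    considering only qubits at or above min_t1_us."""
    usable = [(t1, q) for q, t1 in t1_estimates_us.items() if t1 >= min_t1_us]
    if len(usable) < needed:
        raise ValueError("not enough qubits above the T1 threshold")
    usable.sort(reverse=True)  # longest estimated T1 first
    return [q for _, q in usable[:needed]]

# Made-up snapshot of live T1 estimates, in microseconds.
snapshot = {"q0": 95.0, "q1": 18.0, "q2": 110.0, "q3": 62.0}
chosen = pick_qubits(snapshot, needed=2)
print(chosen)  # ['q2', 'q0']
```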

Quantum computing has long promised a future where certain calculations that would take classical computers millennia can be done in minutes. Getting there requires not just better qubits, but systems smart enough to watch them closely every millisecond. The NBI team has now built one.