Have you ever wondered how a robot vacuum avoids furniture? How an autonomous car “sees” pedestrians? Or how a waiter robot navigates a crowded restaurant without bumping into anyone? The answer lies in a combination of sensors, cameras, and artificial intelligence algorithms working in harmony.

In this article, we explain in detail the vision technologies used by modern robots, how each sensor works, and why 2026's robots are “smarter” than ever.

💡 Did you know? A modern robot can process over 1 million data points per second from its sensors, creating a 3D map of its environment in real time.

📊 Robot Vision Technology in Numbers

The robotics sensor market is growing rapidly. Let's look at some statistics that show the scale of this technological revolution.

🔵 LiDAR: The “Laser Eyes” of Robots

LiDAR (Light Detection and Ranging) is the most widely used technology for robots that need accurate mapping of their environment. It works by emitting thousands of laser pulses per second and measuring the time each pulse takes to return after reflecting off an object. Because the speed of light is known, that round-trip time translates directly into a distance.
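The time-of-flight idea can be sketched in a few lines. This is a minimal illustration, not how any particular sensor's firmware works; the function name `lidar_distance_m` and the example timing are assumptions made for the demo.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def lidar_distance_m(round_trip_time_s: float) -> float:
    """Return the distance to a target from a pulse's round-trip time.

    Hypothetical helper for illustration only.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


# A pulse that comes back after 66.7 nanoseconds hit something about 10 m away.
print(f"{lidar_distance_m(66.7e-9):.2f} m")
```

The tiny time scale is the reason LiDAR needs fast electronics: one nanosecond of timing error corresponds to roughly 15 cm of distance.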

LiDAR Sensor

Light Detection and Ranging

LiDAR creates a “point cloud” - a 3D representation of the space that can contain millions of points. Each point is defined by a distance and an angle, allowing the robot to “see” everything around it with centimeter-level precision.
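To make the “distance and angle” idea concrete, here is a rough sketch of how one sweep of a 2D LiDAR could be turned into x/y points. The function name and the scan layout (a list of ranges plus a start angle and an angular step) are assumptions for illustration; real drivers expose their own formats.

```python
import math


def scan_to_points(ranges_m, angle_min_rad, angle_increment_rad):
    """Convert a 2D scan (one range per beam) into (x, y) points.

    Hypothetical helper: beam i is assumed to point at
    angle_min_rad + i * angle_increment_rad in the sensor's own frame.
    """
    points = []
    for i, r in enumerate(ranges_m):
        # Skip beams that produced no usable return.
        if r is None or not math.isfinite(r):
            continue
        theta = angle_min_rad + i * angle_increment_rad
        # Polar (range, angle) to Cartesian (x, y).
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


# Four beams at 0°, 90°, 180° and 270°; the third beam got no return.
points = scan_to_points([1.0, 2.0, float("inf"), 0.5], 0.0, math.pi / 2)
for x, y in points:
    print(f"x={x:+.2f} m, y={y:+.2f} m")
```

A 3D LiDAR adds a second (vertical) angle per beam, but the conversion follows the same pattern.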

Robots typically use 2D LiDAR (a single scanning plane) or 3D LiDAR (multiple planes). Autonomous vehicles such as Waymo's use 3D LiDAR units that cost thousands of euros, while household robots (like Roomba) use simpler 2D systems costing just €20-50.

📷 Depth Cameras: Human-Like Vision

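Stereo depth cameras work similarly to human eyes: they use two or more lenses and calculate an object's distance from how far it appears shifted between the two images. Other depth cameras measure distance with projected infrared patterns or time-of-flight instead.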
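For stereo cameras, the underlying geometry is the standard pinhole relation: depth = focal length × baseline / disparity. The sketch below assumes an idealized, already-rectified camera pair; the function name and the example numbers are illustrative, not taken from any specific product.

```python
def stereo_depth_m(focal_length_px: float, baseline_m: float,
                   disparity_px: float) -> float:
    """Depth of a point seen by two parallel, rectified cameras.

    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two lenses in meters
    disparity_px:    horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means infinitely far)")
    return focal_length_px * baseline_m / disparity_px


# Illustrative values: 700 px focal length, lenses 5 cm apart.
near = stereo_depth_m(700.0, 0.05, 35.0)   # large shift, close object
far = stereo_depth_m(700.0, 0.05, 7.0)     # small shift, distant object
print(f"near: {near:.2f} m, far: {far:.2f} m")
```

Because depth is inversely proportional to disparity, distant objects produce very small shifts, which is one reason stereo accuracy drops with range.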

Depth Camera

RGB-D / Stereo Vision

Depth cameras combine color (RGB) with depth (D), allowing the robot not only to “see” objects but also to recognize them. A robot with a depth camera can distinguish a person from a piece of furniture, a pet from a toy.

Examples of depth camera technology include Intel RealSense, Microsoft Azure Kinect, and Orbbec. Robots like Boston Dynamics Spot use 5 depth cameras for 360° coverage.

🔊 Ultrasonic Sensors: Bat Technology

Ultrasonic sensors work like the echolocation of bats and dolphins: they emit high-frequency sound waves (above the range of human hearing) and measure how long the echo takes to return.

Ultrasonic Sensor

Sonar Technology

Ultrasonic sensors are simple, cheap, and reliable. They are not affected by light, color, or object transparency. A glass pane that would “fool” a camera is easily detected by ultrasound.
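The echo-timing principle can be sketched as follows. The function names are hypothetical, and the speed of sound uses the common linear approximation for dry air (about 331.3 m/s at 0 °C, rising by roughly 0.606 m/s per degree Celsius).

```python
def speed_of_sound_m_per_s(temp_c: float = 20.0) -> float:
    """Approximate speed of sound in dry air at the given temperature."""
    return 331.3 + 0.606 * temp_c


def ultrasonic_distance_m(echo_time_s: float, temp_c: float = 20.0) -> float:
    """Distance to an obstacle from the echo's round-trip time.

    Hypothetical helper for illustration only.
    """
    if echo_time_s < 0:
        raise ValueError("echo time must be non-negative")
    # The sound travels to the obstacle and back, so halve the path.
    return speed_of_sound_m_per_s(temp_c) * echo_time_s / 2.0


# An echo that arrives after 5.8 ms means the obstacle is about 1 m away.
print(f"{ultrasonic_distance_m(5.8e-3):.2f} m")
```

Sound is nearly a million times slower than light, so the timing is easy to measure with cheap electronics, which helps explain the low cost of these sensors.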

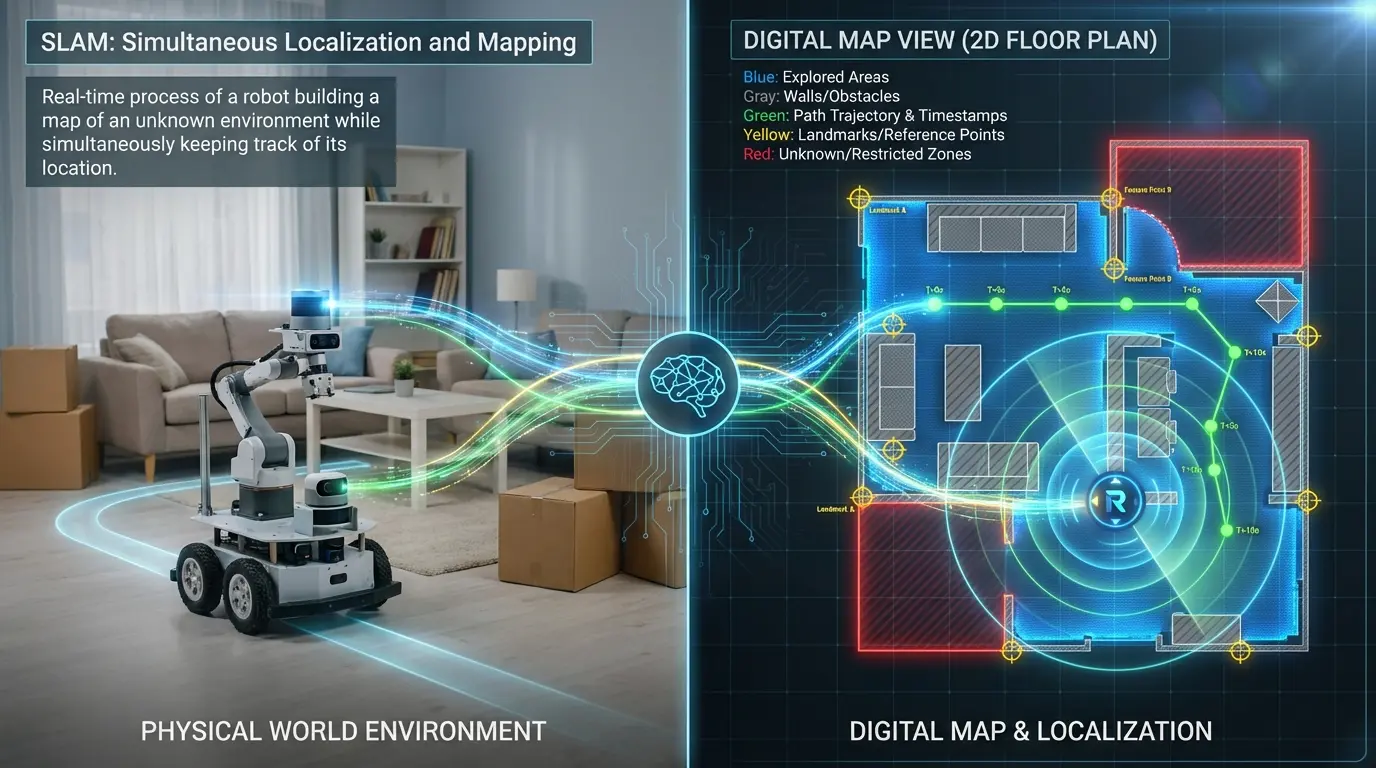

🧠 SLAM: The Brain Behind the Vision

All these sensors would be of little use without SLAM (Simultaneous Localization and Mapping), the family of algorithms that turns raw sensor readings into a usable map. SLAM allows the robot to:

- Map the space while moving

- Locate its position on the map

- Update the map in real time

- Plan the optimal path

Think of it this way: Imagine walking into an unknown room with your eyes closed, touching everything with your hands. Gradually, you create a “mental map” of the space. That's what SLAM does - but millions of times faster and more accurately.
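Full SLAM is too involved for a short example, but the “map the space while moving” step can be sketched on its own. The code below is a toy occupancy-grid update that assumes the robot's pose is already known, which is exactly the part real SLAM has to estimate at the same time. The function name, the grid representation (a dictionary of cells), and the 10 cm cell size are all assumptions for illustration.

```python
import math


def update_grid(grid, pose, scan, cell_size_m=0.1):
    """Mark cells along each beam as free and the cell at its end as occupied.

    grid: dict mapping (col, row) -> "free" or "occupied" (modified in place)
    pose: (x_m, y_m, heading_rad) of the robot, assumed known here
    scan: list of (bearing_rad, range_m) readings relative to the heading
    """
    x, y, heading = pose

    def cell_at(distance_m, angle_rad):
        px = x + distance_m * math.cos(angle_rad)
        py = y + distance_m * math.sin(angle_rad)
        return (math.floor(px / cell_size_m), math.floor(py / cell_size_m))

    for bearing, rng in scan:
        angle = heading + bearing
        # Walk along the beam one cell length at a time: empty space.
        for k in range(int(rng / cell_size_m)):
            cell = cell_at(k * cell_size_m, angle)
            if grid.get(cell) != "occupied":
                grid[cell] = "free"
        # The beam stopped here, so something is in this cell.
        grid[cell_at(rng, angle)] = "occupied"
    return grid


# Robot in the middle of cell (0, 0), facing +x, sees a wall 0.5 m ahead.
grid = update_grid({}, pose=(0.05, 0.05, 0.0), scan=[(0.0, 0.5)])
print(grid[(0, 0)], grid[(5, 0)])
```

Practical systems usually store a probability per cell rather than a hard free/occupied label, so that a single noisy reading cannot overwrite the map.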

📊 Sensor Technology Comparison

| Feature | LiDAR | Depth Camera | Ultrasonic |
|---|---|---|---|
| Cost | €50 - €10,000 | €100 - €500 | €2 - €20 |
| Accuracy | ±2 cm | ±5 cm | ±3 cm |
| Range | 1-100 m | 0.5-10 m | 0.02-4 m |
| Works in darkness | ✅ Yes | ❌ No (except IR) | ✅ Yes |
| Object recognition | ❌ Shape only | ✅ Full | ❌ No |
| Typical use | Mapping | Recognition | Avoidance |

🏠 Everyday Use Examples

🤖 Robot Vacuums (Roomba, Roborock)

They typically combine LiDAR, ultrasonic, cliff, and bump sensors. LiDAR maps the home, downward-facing cliff sensors (usually infrared) detect stairs, ultrasonics help with glass obstacles, and bump sensors are the last line of defense against unexpected obstacles.

🚗 Autonomous Vehicles (Tesla, Waymo)

Depending on the manufacturer, they combine eight or more cameras with LiDAR, radar, and ultrasonics. Tesla relies primarily on cameras with AI (“Tesla Vision”), while Waymo uses expensive 3D LiDAR systems.

🍽️ Waiter Robots (BellaBot, Servi)

They feature 2D LiDAR + cameras + ultrasonics for navigating crowded spaces. AI recognizes people and predicts their movements.

⚖️ Advantages and Disadvantages

✅ Advantages

- ✓ Accurate space mapping

- ✓ Real-time obstacle avoidance

- ✓ 24/7 operation without fatigue

- ✓ Improvement through machine learning

- ✓ Safety for humans

❌ Disadvantages

- ✗ High cost (LiDAR)

- ✗ Degraded performance in bad weather (rain, fog)

- ✗ False readings from reflective surfaces

- ✗ Energy consumption

- ✗ Outdoor limitations

🔮 The Future of Robot Vision

The next generation of sensors will bring event cameras (cameras that record only changes), thermal imaging for night vision, and neuromorphic sensors that mimic the human brain. Apple, Meta, and Nvidia are investing billions in these technologies.

By 2030, robots could be able to “see” better than humans in almost every condition - from total darkness to snowstorms. Robot vision isn't just technology - it's the foundation for a future where machines and humans coexist harmoniously.