Logistics, finance, network design: the NP-hard problems quantum algorithms are expected to tackle. How close are we in practice?

🔢 The problem: why some questions can't be answered

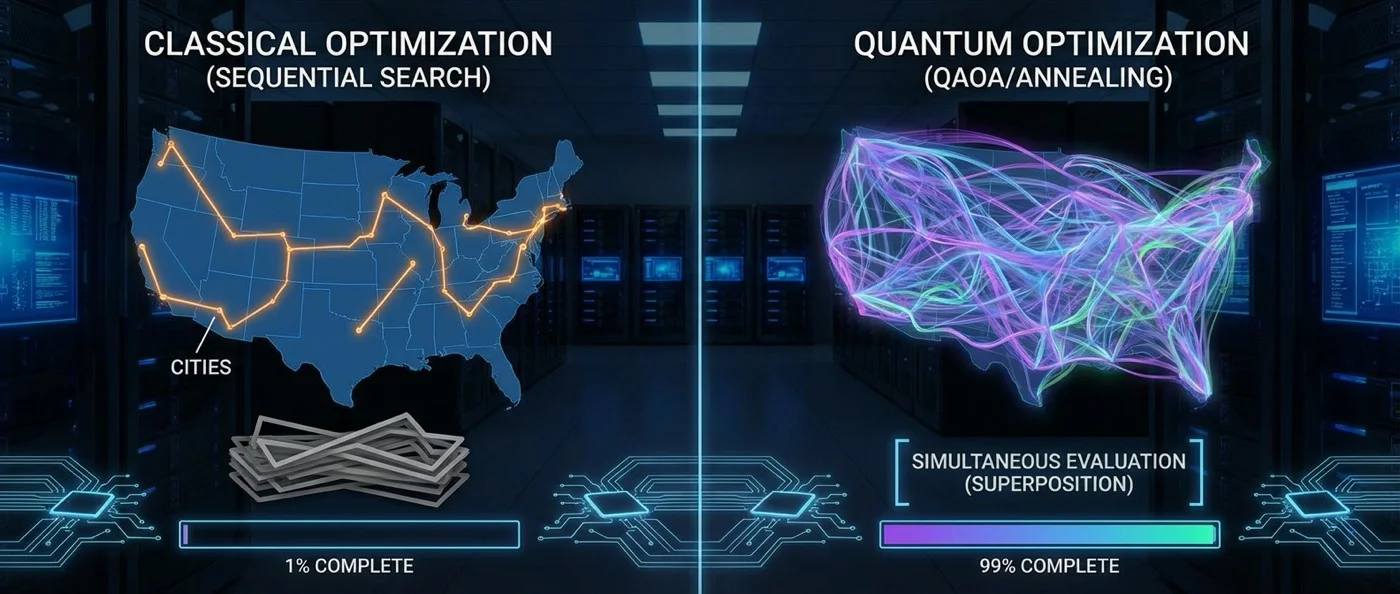

Imagine a delivery network with 50 cities. A driver must visit each city exactly once and return to the starting point, choosing the shortest route. It sounds simple, but the number of possible visiting orders is 50! (50 factorial), roughly 3 × 10⁶⁴. Even the fastest classical supercomputer would need far longer than the age of the universe to examine every possibility.
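The arithmetic behind this estimate can be checked directly. The sketch below assumes a hypothetical machine that evaluates 10^18 routes per second (an exascale-style figure chosen only for illustration) and an age of the universe of about 1.38 × 10^10 years.

```python
import math

n_cities = 50

# Number of visiting orders quoted in the text: 50!
orderings = math.factorial(n_cities)

# Fixing the start city and ignoring direction leaves (n - 1)! / 2 distinct round trips.
distinct_tours = math.factorial(n_cities - 1) // 2

# Assumption for illustration: one route evaluated per operation at 10^18 operations/second.
routes_per_second = 10**18
seconds_per_year = 365.25 * 24 * 3600
years_needed = orderings / routes_per_second / seconds_per_year

# Assumption: approximate age of the universe in years.
age_of_universe_years = 1.38e10

print(f"orderings:      {orderings:.2e}")
print(f"distinct tours: {distinct_tours:.2e}")
print(f"years needed:   {years_needed:.2e}")
print(f"ratio to age of universe: {years_needed / age_of_universe_years:.2e}")
```

Even after removing equivalent tours (same cycle, different start or direction), brute-force enumeration stays hopelessly out of reach, which is why practical solvers rely on heuristics and approximations.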

This is the famous Travelling Salesman Problem (TSP), a classic example of an NP-hard problem. It's not the only one: graph coloring, Max-Cut, Boolean satisfiability (SAT), vehicle routing, telecommunications network design, and portfolio optimization all belong to the same family. For these problems, no algorithm is known that finds the optimal solution in polynomial time, and computational complexity theory gives strong reasons to believe none exists (finding one would imply P = NP). Quantum computing promises to change the practical picture, at least in part.

⚙️ The quantum approach: two paths

Quantum optimization follows two main strategies, corresponding to two different types of quantum computers.

Quantum annealing

Quantum annealing exploits quantum tunneling to find the global minimum of a cost function. Unlike classical simulated annealing, which relies on thermal fluctuations to overcome energy barriers, the quantum version can pass through them. As first described by Ray, Chakrabarti, and Chakrabarti (1989) in Physical Review B, the quantum tunneling probability depends not only on the height Δ of a barrier but also on its width w, providing an additional advantage: if the barriers are “tall but narrow,” quantum annealing overcomes them far more effectively.
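The comparison is often written in the following approximate form. This is a sketch under stated assumptions: $T$ (temperature), $k_B$ (Boltzmann constant), and $\Gamma$ (strength of the tunneling field) are symbols introduced here for illustration, and the barrier is considered in isolation.

$$
P_{\text{thermal}} \propto e^{-\Delta / (k_B T)}, \qquad
P_{\text{tunnel}} \propto e^{-\sqrt{\Delta}\, w / \Gamma}
$$

The thermal escape probability depends only on the height $\Delta$, while the tunneling probability depends on the product $\sqrt{\Delta}\, w$. For a barrier that is tall but narrow (roughly $w \ll \sqrt{\Delta}$), the thermal term is strongly suppressed while the tunneling term is not.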

The method was formulated in its present form by Kadowaki and Nishimori in 1998, and the first experimental demonstration followed in 1999 in the disordered magnet LiHoₓY₁₋ₓF₄. The algorithm begins from a quantum superposition of all possible solutions with equal weights, then evolves the system according to the time-dependent Schrödinger equation. If the change is slow enough, the system remains close to the ground state of the instantaneous Hamiltonian; this is the basis of adiabatic quantum computation.
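One common way to write this evolution interpolates between a driver Hamiltonian and the problem Hamiltonian. This is a textbook-style sketch, not necessarily the exact form used in the works cited above; the symbols $H_0$, $H_P$, $T$, $J_{ij}$, and $h_i$ are notation assumed here for illustration.

$$
H(t) = \left(1 - \tfrac{t}{T}\right) H_0 + \tfrac{t}{T}\, H_P, \qquad
H_0 = -\sum_i \sigma^x_i, \qquad
H_P = \sum_{i<j} J_{ij}\, \sigma^z_i \sigma^z_j + \sum_i h_i\, \sigma^z_i
$$

At $t = 0$ the ground state of $H_0$ is the equal-weight superposition of all bit strings. At $t = T$ the Hamiltonian is purely $H_P$, whose ground state encodes the answer. How slow is "slow enough" is governed by the smallest energy gap between the ground state and the first excited state along the way: the smaller the gap, the longer the total time $T$ must be.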

🔢 How are problems encoded?

NP-hard problems are converted into QUBO (Quadratic Unconstrained Binary Optimization) or Ising model form. Andrew Lucas (2014, Frontiers in Physics) showed how many NP-hard problems — TSP, Max-Cut, graph coloring, SAT — can be encoded as Ising models. The solution corresponds to finding the minimum energy state of a spin glass.
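As a minimal sketch of such an encoding, the snippet below writes Max-Cut on a small, made-up 4-node graph as a QUBO and solves it by brute force. The graph, the function names, and the upper-triangular convention for the matrix Q are assumptions for illustration; a real annealer would replace the exhaustive search. Substituting x_i = (1 - s_i) / 2 with s_i = ±1 turns the same objective into an Ising model.

```python
from itertools import product

# Hypothetical example graph: a square 0-1-2-3 with one diagonal 0-2.
n = 4
edges = [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2)]

def maxcut_qubo(n, edges):
    """Build an upper-triangular Q so that x^T Q x = -(number of cut edges).

    Each edge (i, j) contributes -(x_i + x_j - 2 x_i x_j), which is -1 when
    the endpoints are on different sides and 0 otherwise.
    """
    Q = [[0] * n for _ in range(n)]
    for i, j in edges:
        Q[i][i] -= 1
        Q[j][j] -= 1
        Q[min(i, j)][max(i, j)] += 2
    return Q

def qubo_energy(Q, x):
    """Evaluate x^T Q x for a binary vector x."""
    size = len(x)
    return sum(Q[i][j] * x[i] * x[j] for i in range(size) for j in range(size))

def cut_size(x, edges):
    """Count edges whose endpoints fall on different sides of the partition."""
    return sum(1 for i, j in edges if x[i] != x[j])

Q = maxcut_qubo(n, edges)

# Brute force over all 2^n assignments; feasible only for tiny n.
best_x = min(product((0, 1), repeat=n), key=lambda x: qubo_energy(Q, x))
best_cut = -qubo_energy(Q, best_x)

print("best partition:", best_x, "cut size:", best_cut)
```

Minimizing the QUBO energy is the same as maximizing the cut, so the lowest-energy bit string is the best partition of the graph.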

QAOA (Quantum Approximate Optimization Algorithm)

The second path is algorithmic, designed for gate-based quantum computers. QAOA, proposed by Farhi, Goldstone, and Gutmann in 2014, uses two Hamiltonians: a “cost” Hamiltonian (H_C), whose ground state encodes the optimal solution, and a “mixer” Hamiltonian (H_M), which ensures exploration of the solution space. By alternately applying these operators to a superposition state, the algorithm approaches the optimal solution.
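The alternation of cost and mixer layers can be sketched with a tiny classical statevector simulation. The example below is an illustration under stated assumptions, not a reference implementation: it uses a made-up 4-node ring graph, a single layer (p = 1), a transverse-field mixer (the sum of Pauli-X operators), and a coarse grid search in place of a proper classical optimizer. Here the cost counts cut edges and is maximized, which is equivalent to minimizing its negative.

```python
import cmath
import math

def cut_value(z, edges):
    """Number of edges cut by the assignment stored in the bits of integer z."""
    return sum(1 for i, j in edges if ((z >> i) & 1) != ((z >> j) & 1))

def qaoa_expected_cut(n, edges, gammas, betas):
    """Expected cut size after applying len(gammas) QAOA layers to |+>^n."""
    dim = 2 ** n
    costs = [cut_value(z, edges) for z in range(dim)]
    state = [complex(1 / math.sqrt(dim))] * dim  # equal superposition
    for gamma, beta in zip(gammas, betas):
        # Cost layer: exp(-i * gamma * C) is diagonal in the computational basis.
        state = [amp * cmath.exp(-1j * gamma * c) for amp, c in zip(state, costs)]
        # Mixer layer: exp(-i * beta * X) = cos(beta) I - i sin(beta) X on each qubit.
        cos_b, sin_b = math.cos(beta), math.sin(beta)
        for q in range(n):
            mask = 1 << q
            new_state = list(state)
            for z in range(dim):
                if not z & mask:
                    a, b = state[z], state[z | mask]
                    new_state[z] = cos_b * a - 1j * sin_b * b
                    new_state[z | mask] = cos_b * b - 1j * sin_b * a
            state = new_state
    return sum(abs(amp) ** 2 * c for amp, c in zip(state, costs))

# Hypothetical instance: a ring of 4 nodes (its maximum cut is 4).
n = 4
edges = [(0, 1), (1, 2), (2, 3), (3, 0)]

# Zero angles leave the uniform superposition untouched: this is random guessing.
random_guess = qaoa_expected_cut(n, edges, [0.0], [0.0])

# Coarse grid search over (gamma, beta) in [0, pi] x [0, pi].
grid = 16
best_value, best_g, best_b = max(
    (qaoa_expected_cut(n, edges, [math.pi * g / grid], [math.pi * b / grid]), g, b)
    for g in range(grid + 1)
    for b in range(grid + 1)
)

print("random guessing:", round(random_guess, 4))
print("best p=1 expected cut:", round(best_value, 4))
```

A single layer already beats random guessing on this instance but does not reach the true optimum, which is why the number of layers p matters so much in practice.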

In theory, QAOA converges to the optimal value as the number of layers p grows without bound; this follows from the adiabatic theorem. In practice, a 2023 study in npj Quantum Information (Lykov, Wurtz, Poole, Saffman et al.) showed that p > 11 is needed for scalable advantage on the MaxCut problem. Furthermore, Dalzell, Harrow, Koh, and La Placa (2020) estimated that a QAOA circuit with just 420 qubits and 500 constraints would take at least a century to simulate on classical supercomputers, which would be sufficient for “quantum supremacy.”

🏭 D-Wave: the first commercial quantum optimizer

Canadian company D-Wave Systems brought theory to market. In 2011, it announced the D-Wave One, the first commercial quantum annealer, with a 128-qubit processor. The first buyer was Lockheed Martin. In 2013, a consortium of Google, NASA, and the Universities Space Research Association purchased a 512-qubit system. In 2015, Google announced that the D-Wave 2X outperformed both simulated annealing and Quantum Monte Carlo methods by a factor of up to 100,000,000 on specific optimization problems.

However, the effectiveness was disputed. An extensive 2014 study in Science, led by Matthias Troyer (ETH Zurich), was characterized as “the most thorough study ever done” on a D-Wave processor. The conclusion: “no quantum speedup” across the entire range of tests, though the possibility of advantage in future tests or different problem categories was not ruled out.

"The subtle nature of the quantum speedup question" — the ETH Zurich study in Science (2014) underscored that evaluating quantum acceleration critically depends on how it's defined and measured, something that remains an open research question.

Matthias Troyer, ETH Zurich / Science (2014)

Importantly, the D-Wave architecture is not a universal quantum computer. It cannot run algorithms like Shor's or Grover's. However, at Qubits 2021, D-Wave announced it is developing its first universal quantum computer, capable of running gate algorithms (QAOA, VQE) in addition to quantum annealing.

🚀 Applications: from logistics to chemistry

The sectors where quantum optimization could shine include:

- Logistics & supply chain: fleet routing, warehousing, delivery scheduling — all classic NP-hard problems.

- Finance: portfolio optimization, risk assessment, derivatives pricing.

- Telecommunications: frequency allocation, network design, interference minimization.

- Pharmaceuticals & chemistry: protein structure prediction, molecular design, clinical trial optimization.

- Energy grids: optimal load distribution in electrical networks, renewable energy integration.

1QBit, the first company dedicated to software for commercial quantum computers, has already launched partnerships with the DNA-SEQ Alliance for cancer research and with financial firms. Meanwhile, NASA, Google, and teams at Texas A&M, USC, and LANL are searching for problem categories where quantum annealing shows a clear advantage.

🔄 Hybrid models: the bridge to the future

Given the limitations of current technology (decoherence, noise, limited qubit count), the most realistic approach is hybrid quantum-classical models. In these, the quantum processor handles the hardest part (exploring the energy landscape), while a classical computer handles parameter optimization. A 2020 study in Computers & Chemical Engineering (Ajagekar, Humble, You) demonstrated such hybrid strategies for large-scale problems, while a 2023 publication in Scientific Reports applied hybrid quantum computing to community detection in brain networks.

The VQE (Variational Quantum Eigensolver) algorithm follows a similar philosophy: the quantum computer prepares candidate states and measures their energy, while the classical computer optimizes the parameters. Because each circuit can be kept shallow, this approach is well suited to today's noisy (NISQ) quantum processors.
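The division of labor can be sketched on a single simulated qubit. Everything specific here is an assumption chosen for illustration: the toy Hamiltonian H = Z + 0.5 X, the one-parameter ansatz Ry(θ)|0⟩, the parameter-shift rule for gradients, and plain gradient descent as the classical optimizer. On real hardware, the `energy` function would be estimated from repeated measurements rather than computed exactly.

```python
import math

def ansatz_state(theta):
    """'Quantum' step: prepare Ry(theta)|0> = cos(theta/2)|0> + sin(theta/2)|1>."""
    return math.cos(theta / 2), math.sin(theta / 2)

def energy(theta):
    """Expectation value <psi|H|psi> for the toy Hamiltonian H = Z + 0.5 X."""
    a, b = ansatz_state(theta)
    expect_z = a * a - b * b   # <Z>
    expect_x = 2 * a * b       # <X> for real amplitudes
    return expect_z + 0.5 * expect_x

def parameter_shift_gradient(theta):
    """Gradient from two extra energy evaluations at theta +/- pi/2."""
    return 0.5 * (energy(theta + math.pi / 2) - energy(theta - math.pi / 2))

# 'Classical' step: gradient descent on the circuit parameter.
theta = 0.1            # arbitrary starting point
learning_rate = 0.4    # assumed step size
for _ in range(200):
    theta -= learning_rate * parameter_shift_gradient(theta)

vqe_energy = energy(theta)

# Exact ground-state energy of H = Z + 0.5 X is -sqrt(1^2 + 0.5^2).
exact_ground_energy = -math.sqrt(1 + 0.5 ** 2)

print("VQE energy:  ", vqe_energy)
print("exact energy:", exact_ground_energy)
```

The loop never needs to know the full quantum state. It only asks the quantum side for energies at chosen parameter values, which is what makes the scheme tolerant of short, noisy circuits.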

📊 How close are we really?

The honest answer: closer than a decade ago, but still far from practical advantage at industrial scale. Quantum annealing shows promise on specialized benchmarks, but conclusive evidence of quantum speedup remains elusive. QAOA needs deeper circuits than today's noise levels allow.

The real change may come with quantum error correction and the transition from NISQ to fault-tolerant processors. At that point, algorithms like QAOA could be run at sufficient depth to test whether they can outperform the best classical alternatives.

Until then, quantum optimization is not merely theory — it's an active field with real hardware (D-Wave Advantage with 5,000+ qubits), real companies (1QBit, IBM, Google), and real experiments. The question is no longer whether quantum optimization will work, but when — and on which problems first.