Three years after GPT's public debut, OpenAI has released something completely different. OpenAI O1, codenamed "Strawberry" until recently, isn't just a bigger model. It's what the company calls the first step toward artificial intelligence that can actually think.


🧠 Reasoning Models: Trading Speed for Smarts

The core bet behind **OpenAI O1** is simple in theory, revolutionary in practice. Instead of producing an answer immediately, as large language models usually do, O1 takes its time. It thinks, analyzes, and thinks again. Mira Murati, OpenAI's CTO, calls it "a new paradigm for models." She's not overselling. When you feed it a math problem that would stump GPT-4o, O1 doesn't respond right away. You wait. After seconds, or minutes for tough problems, you get a solution that actually works. On a qualifying exam for the International Mathematical Olympiad (IMO), the results speak volumes: **83% of problems solved by O1 versus 13% by GPT-4o**. That is more than a sixfold jump on the same test.

83% Math Success Rate (IMO qualifying exam)

89th Percentile on Codeforces

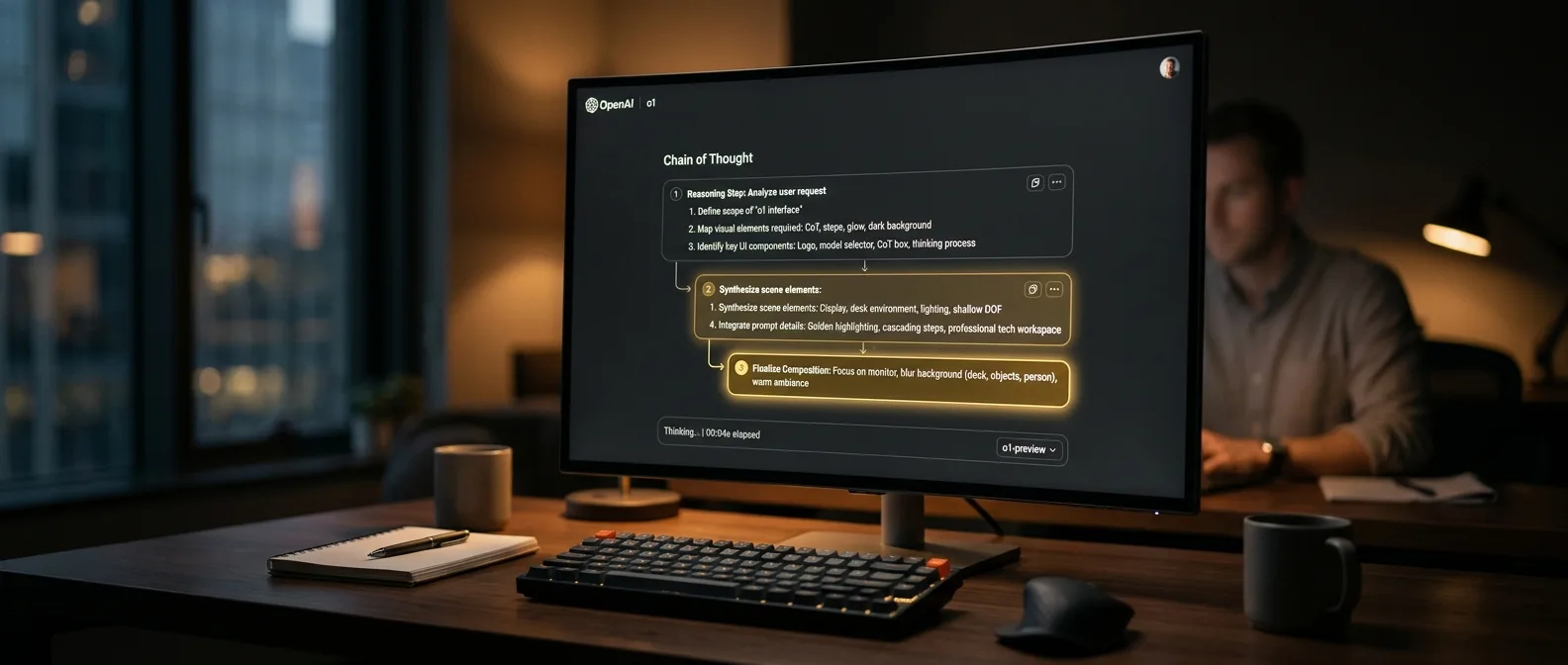

⚡ Chain-of-Thought: The Secret Sauce

Behind this dramatic improvement lies a technique that sounds familiar: **chain-of-thought reasoning**. The difference? You no longer add it to your prompt; the model does it internally, on its own. The training process combined this technique with reinforcement learning. Simply put: the model gets positive feedback when it reasons correctly and negative feedback when it stumbles, like a kid learning to solve math problems. Mark Chen from OpenAI puts it bluntly: "The model learns to think for itself, instead of mimicking how humans think." This matters. Traditional LLMs lean on experience: they have seen similar problems and reproduce the solutions. O1 is designed to tackle problems it has never encountered before.

The Speed Paradox

But there's a flip side to this success. O1 is slow. While GPT-4o returns answers in seconds, O1 needs time to think. For a programmer working through a complex algorithm, the wait pays off. For casual conversation, probably not.

🔬 Benchmarks That Impress

The numbers tell their own story. On MMLU, a general knowledge benchmark widely treated as a gold standard, O1 scored **92.3%**. On the MATH benchmark for mathematics, it hit **94.8%**. But the most impressive part? On PhD-level tests in physics, chemistry, and biology, the model performed on par with doctoral students.

On Codeforces, a competitive programming platform whose contests intimidate even experienced developers, O1 reached the 89th percentile. GPT-4o? The 11th.

"This is what we consider the new paradigm for models. It's much better at handling complex reasoning tasks."

Mira Murati, CTO, OpenAI

Limitations That Matter

Before you get too excited, O1 has serious constraints. It can't browse the web. It doesn't accept images or audio. It's strictly text-only. For a researcher trying to solve a complex mathematical problem, these drawbacks are negligible. For the everyday user? Less so.

📊 OpenAI's New Strategy

O1 doesn't replace GPT-4o. It complements it. OpenAI presents two parallel paths: the scaling paradigm (bigger models, more data) and the reasoning paradigm (better thinking). Murati confirmed that GPT-5, which is still to come, will combine both approaches: a larger model with built-in reasoning capabilities.

O1-Preview: Full reasoning capability, more expensive

O1-Mini: 80% cheaper, specialized for code and math

Safety as a Bonus

An unexpected benefit of reasoning: better safety. In jailbreaking tests, which attempt to bypass safety measures, O1 scored **84/100**. GPT-4o? A dismal **22/100**. The explanation makes sense: a model that thinks about the consequences of its actions is less likely to produce harmful content.

🎯 Competition and Future

Google isn't sitting idle. AlphaProof, which combines language models with reinforcement learning for math problems, shows others are moving in the same direction. But O1 seems to have an edge in generalization. It's not just good at math; it's good at a broad range of tasks that require reasoning. Noah Goodman from Stanford points out something important: the ability to trade speed for accuracy would be "nice progress." That's exactly what O1 does.

Real Example: O1 solved this math puzzle that stumped GPT-4o: "A princess is as old as the prince will be when the princess will be twice as old as the prince was when the princess's age was half the sum of their current ages."

Answer: The prince is 30, the princess is 40.
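The stated answer can be checked mechanically. What follows is a minimal Python sketch, not anything published by OpenAI: the function name `satisfies_riddle` and its step-by-step reading of the riddle are assumptions made for illustration. It unwinds the sentence from the innermost clause outward and tests whether a given pair of ages fits.

```python
from fractions import Fraction


def satisfies_riddle(princess, prince):
    """Return True if the two current ages fit the riddle (hypothetical helper)."""
    princess = Fraction(princess)
    prince = Fraction(prince)

    # Innermost clause: the moment when the princess's age was
    # half the sum of their current ages.
    princess_then = (princess + prince) / 2
    years_ago = princess - princess_then
    prince_then = prince - years_ago

    # Middle clause: the moment when the princess will be twice
    # as old as the prince was at that earlier moment.
    princess_future = 2 * prince_then
    years_ahead = princess_future - princess
    prince_future = prince + years_ahead

    # Outer clause: the princess is now as old as the prince will be then.
    return princess == prince_future


print(satisfies_riddle(40, 30))  # True
print(satisfies_riddle(40, 31))  # False
```

Working the same steps symbolically, with P for the princess's age and Q for the prince's, reduces the riddle to 3P = 4Q. So the wording fixes only the 4:3 ratio between the ages, and 40 and 30 is one pair that fits it.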


The Cost of Intelligence

One of O1's biggest bets is cost. Mark Chen argues that the new approach allows them to "deliver intelligence more cheaply." If this proves true, the implications are massive. It means you don't need to burn half a data center to get smart AI; you just need to make it think.