The Largest Compute Deal in AI History

OpenAI, the company behind ChatGPT, announced an unprecedented deal with Cerebras Systems, a startup specializing in building massive AI processors. The $10 billion deal represents the largest investment in computing infrastructure in the history of artificial intelligence.

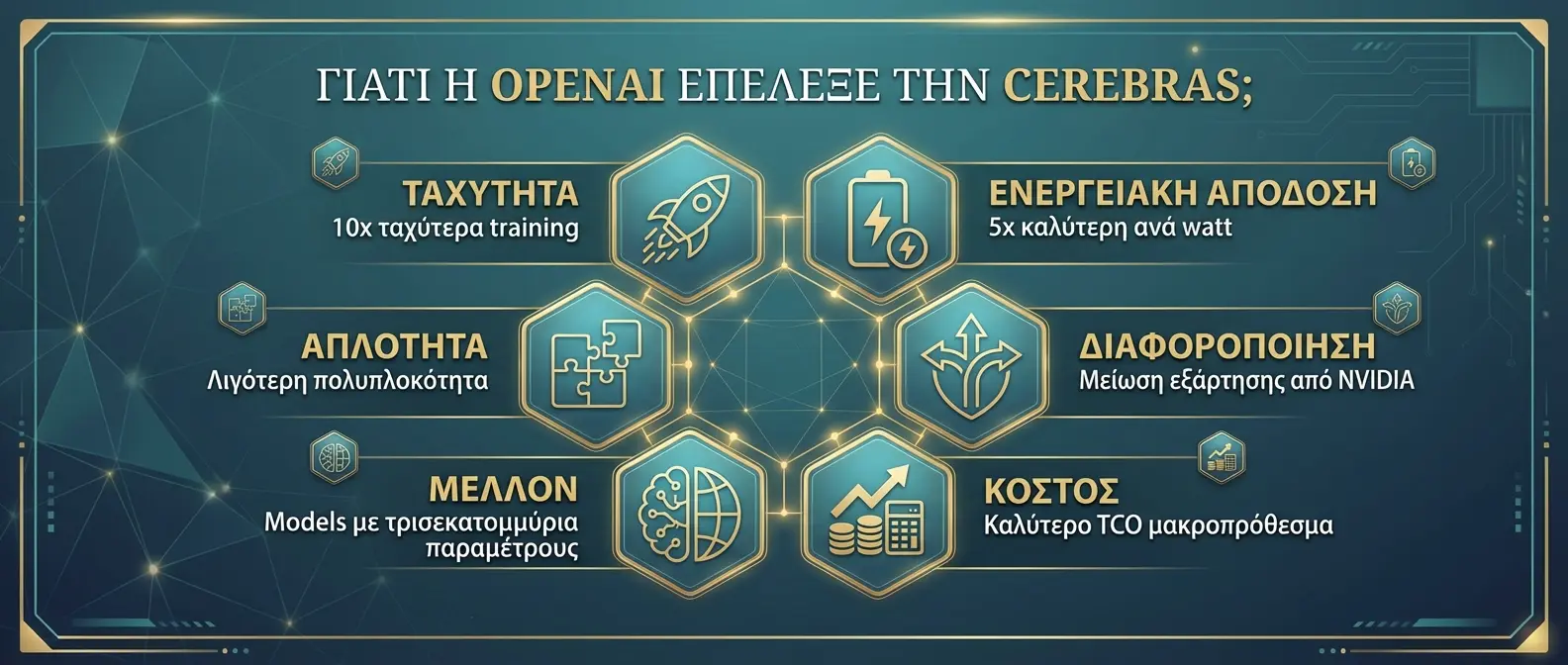

This move marks a strategic shift for OpenAI, which has until now relied primarily on NVIDIA GPUs. The partnership with Cerebras gives the company access to an entirely different computing architecture.

Who is Cerebras?

Cerebras Systems was founded in 2016 with a bold goal: to create the world's largest processor. The result was the WSE (Wafer-Scale Engine), a chip that occupies an entire silicon wafer, making it roughly 56 times larger in area than the biggest GPUs.

🤖 OpenAI

- Founded: 2015, San Francisco

- CEO: Sam Altman

- Products: ChatGPT, GPT-4, DALL-E, Sora

- Valuation: ~$100B

- Users: 200+ million

🔧 Cerebras Systems

- Founded: 2016, Sunnyvale CA

- CEO: Andrew Feldman

- Product: WSE-3 Wafer-Scale Chip

- Valuation: ~$8B

- Employees: 450+

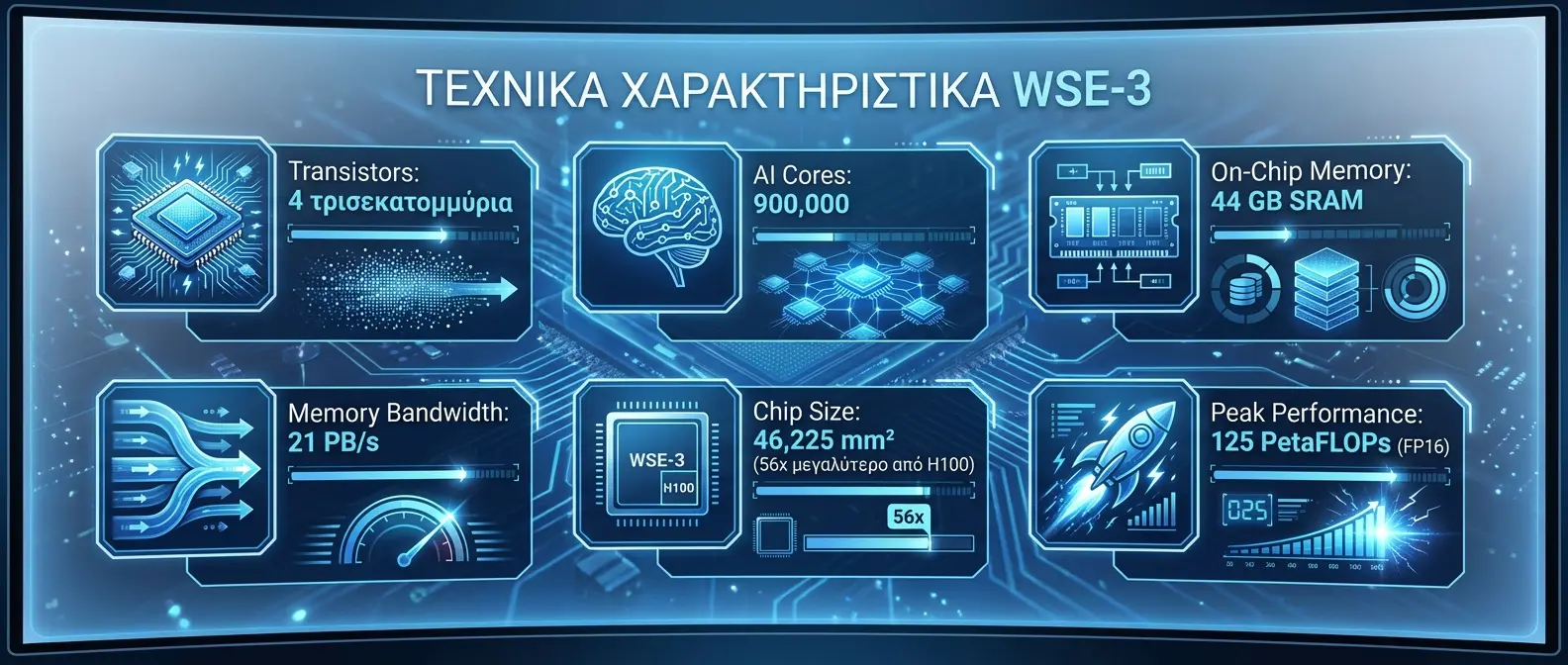

What is the WSE-3?

Cerebras' Wafer-Scale Engine 3 (WSE-3) is the largest and most powerful AI processor ever built. Instead of cutting a silicon wafer into many small chips, Cerebras uses the entire wafer as a single unified chip.

🔧 WSE-3 Technical Specifications

This partnership will allow us to train models that were previously impossible to imagine. Cerebras' architecture is ideal for next-generation AI.

Why Did OpenAI Choose Cerebras?

🎯 Strategic Reasons

Implementation Timeline

What Does This Mean for Competition?

This deal changes the AI hardware landscape. NVIDIA, which dominates the market with 80%+ share, now faces a new competitor. Companies like Google, Microsoft, and Amazon will likely reassess their own strategies.

Meta is already developing its own chips, while Google is investing in TPUs. OpenAI's move toward Cerebras shows that the AI infrastructure market is becoming increasingly diverse.

🔮 Conclusion

The OpenAI-Cerebras deal marks a turning point for the AI industry. With a $10 billion investment in wafer-scale computing, OpenAI is betting that the future of AI computation extends beyond GPUs.

The big question: Will Cerebras' architecture be able to meet the demands of GPT-5? The next 18 months will provide the answer.