What's Happening with Grok AI?

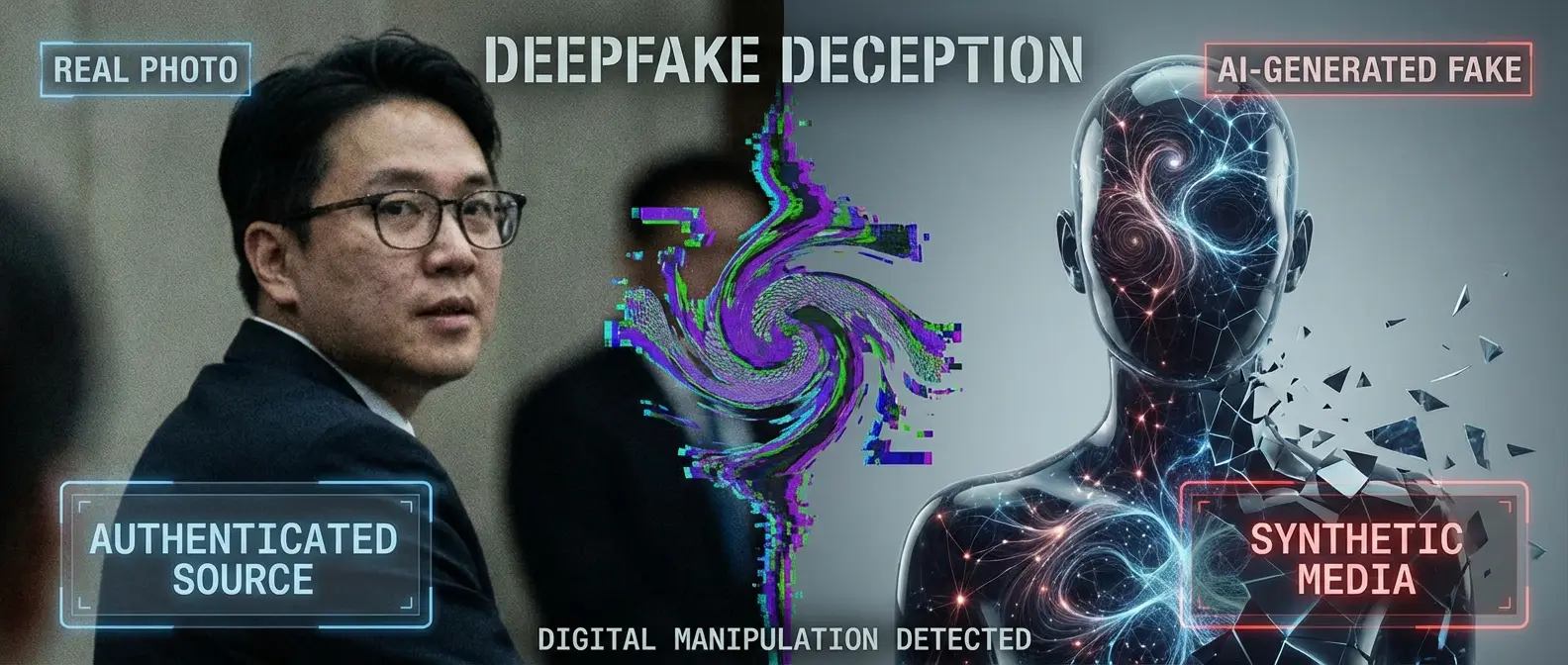

Grok, the AI chatbot from Elon Musk's xAI that is available through the X platform (formerly Twitter), is at the center of a major scandal. Grok has been used to create fake images of celebrities and political figures without their consent.

Compared with other AI image generators such as OpenAI's DALL-E or Midjourney, Grok appears to place far looser restrictions on creating images of real people, and this has led to widespread abuse.

Key Points of the Scandal

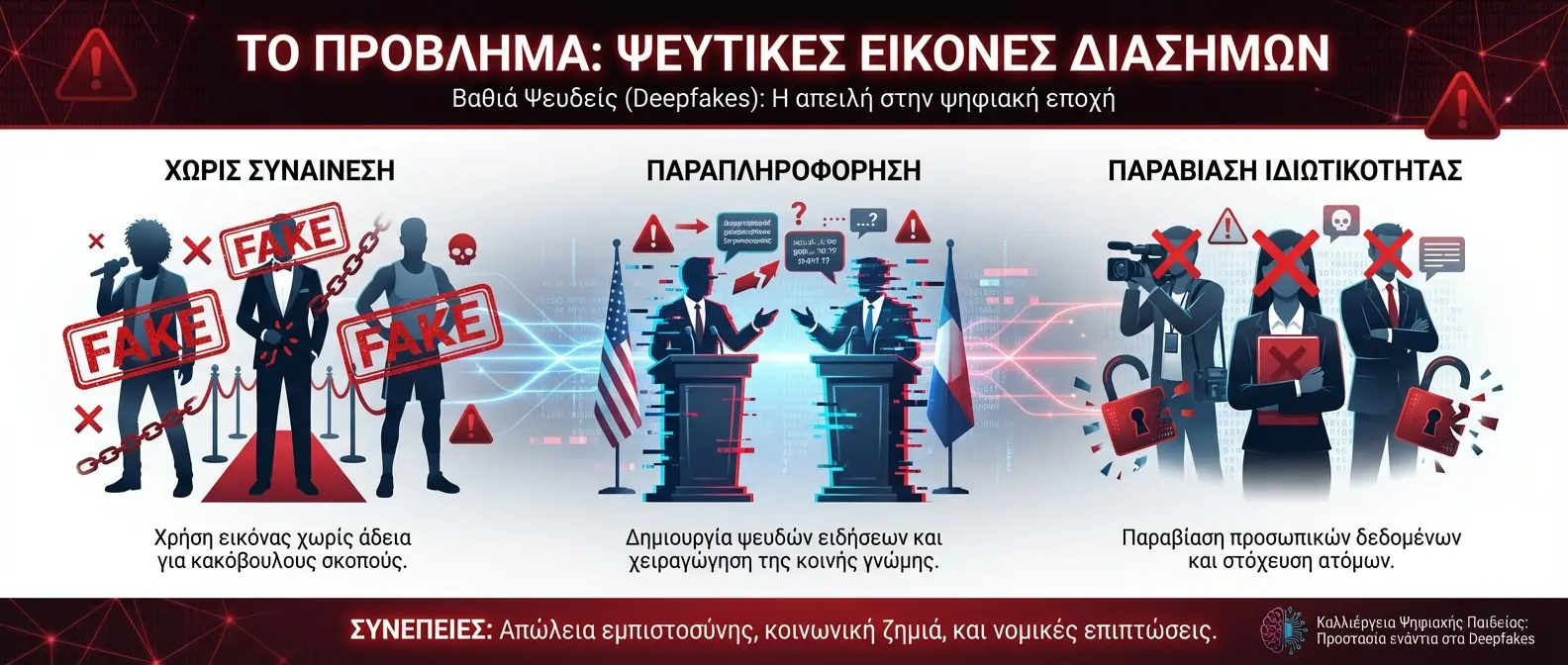

- Creation of deepfake images of politicians without consent

- Reproduction of celebrities in fabricated scenarios

- Lack of adequate safety filters on the platform

- Alleged violation of California personal data protection laws

- Threat to the democratic process through misinformation

California's Response

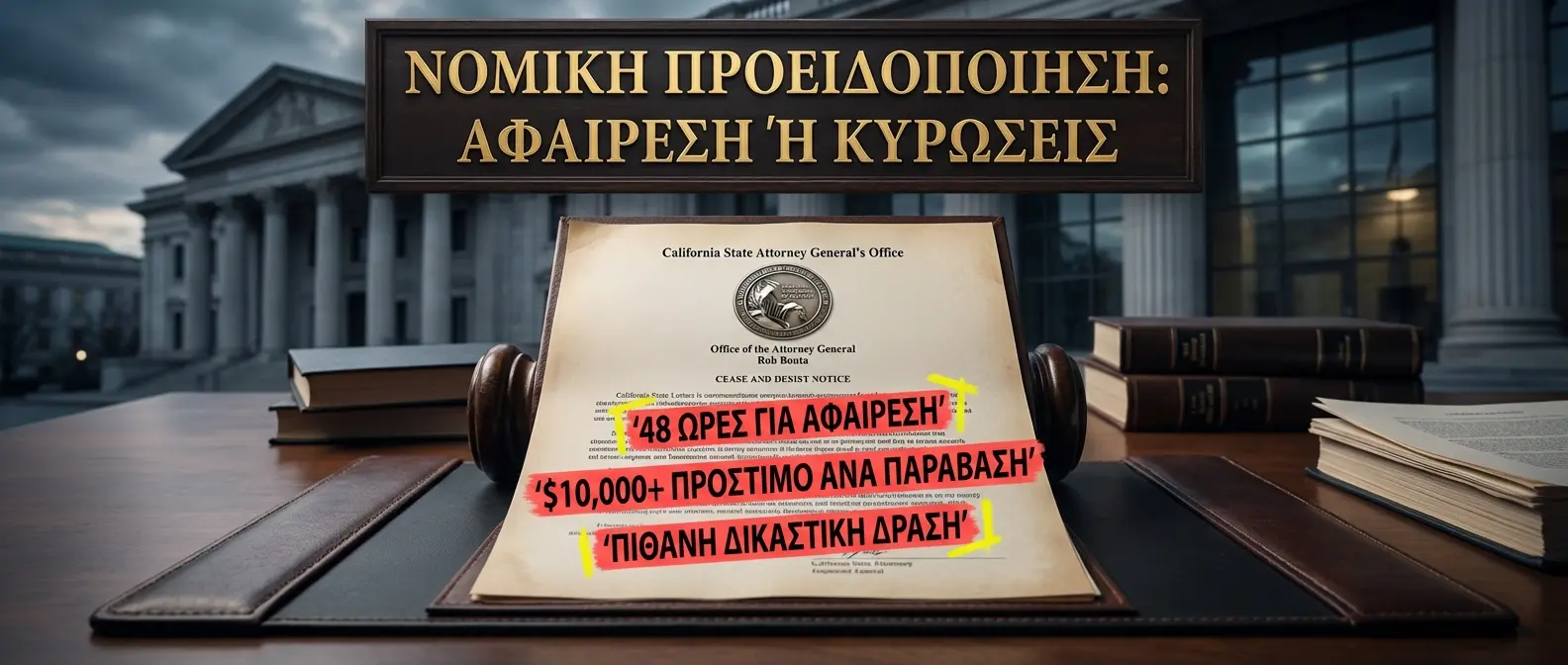

California Attorney General Rob Bonta, known for his tough stance against tech companies that violate citizens' rights, did not hesitate to act: he sent xAI a cease-and-desist letter, the first step before a potential lawsuit.

AI technology cannot be used to violate citizens' rights. Creating fake images without consent is illegal and will be prosecuted.

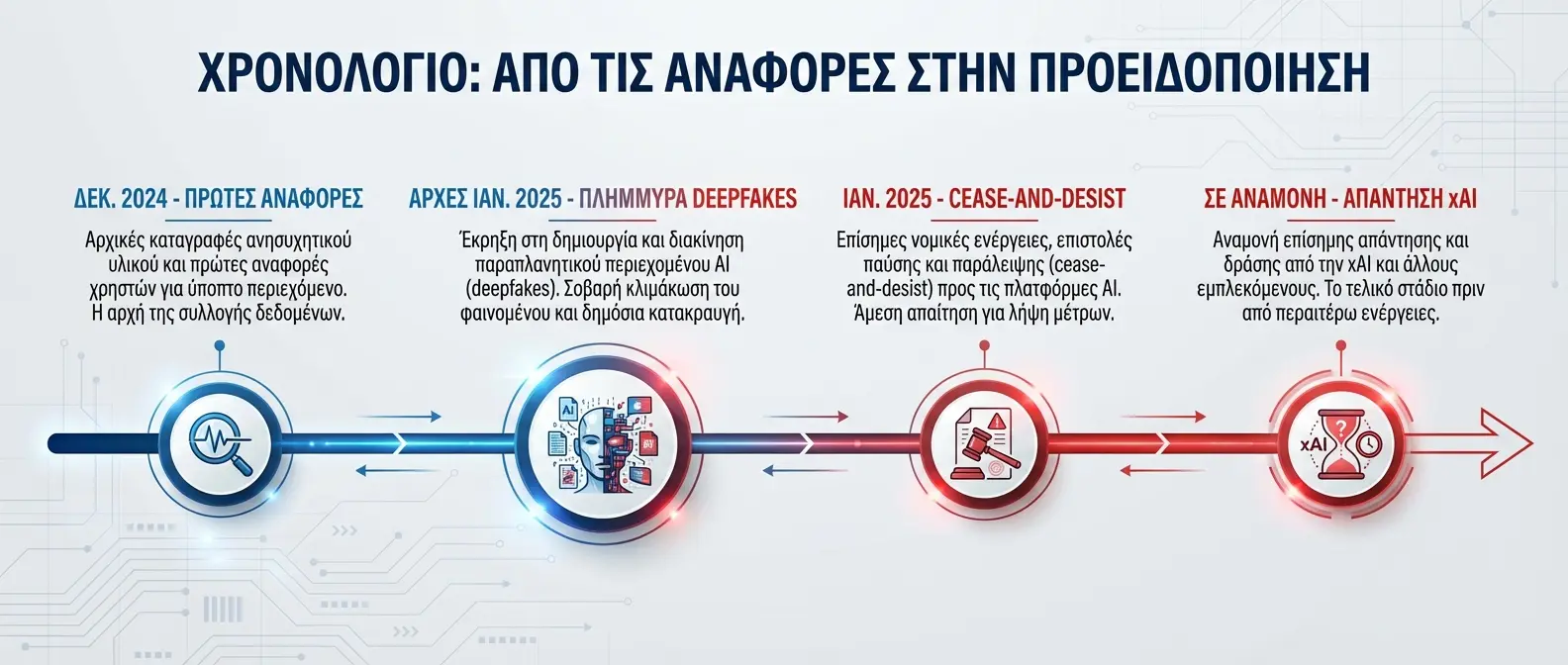


Alarming Numbers

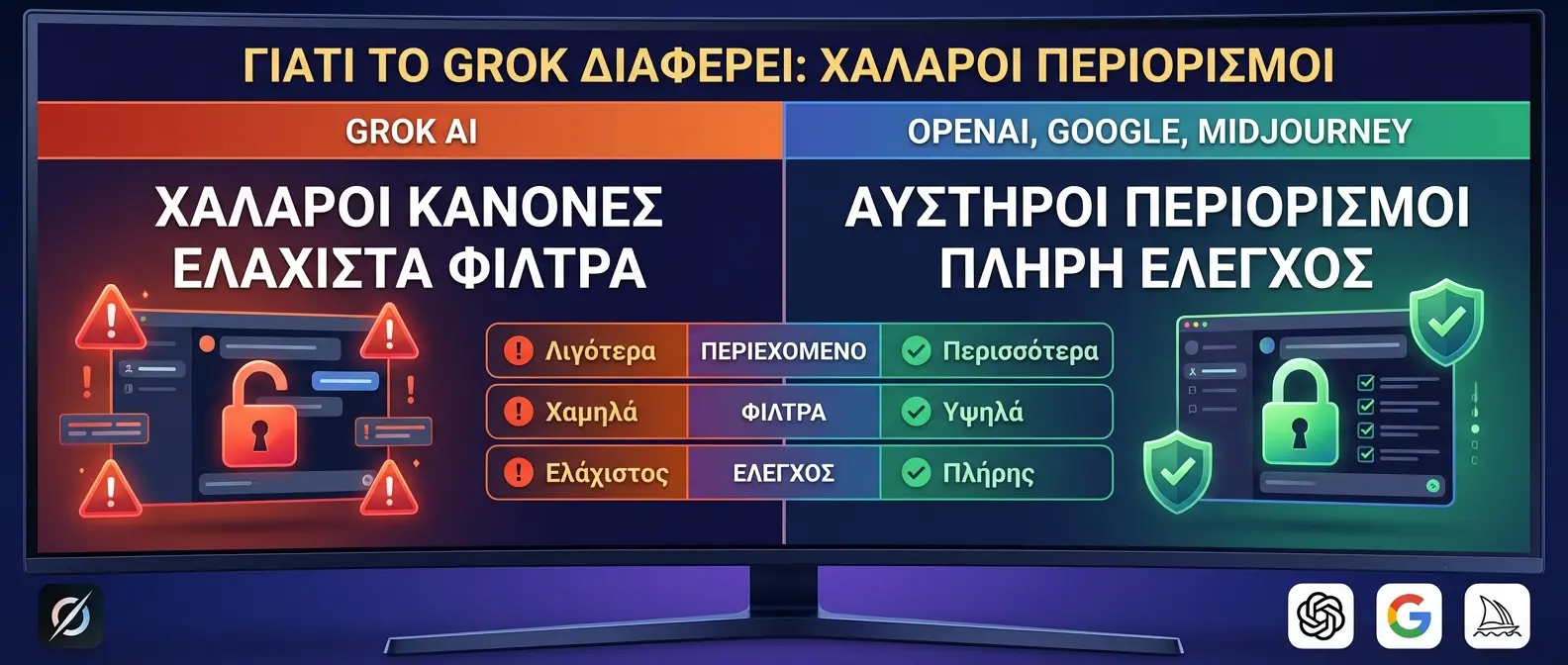

Why Grok Is Different

🚫 Grok AI (xAI)

- Relaxed content creation restrictions

- Ability to depict real people

- Minimal content moderation

- Direct publishing to X

✅ Other AI tools (OpenAI, Google)

- Strict safety rules

- Celebrity deepfakes prohibited

- Watermarks on AI-generated images

- Content review before publishing

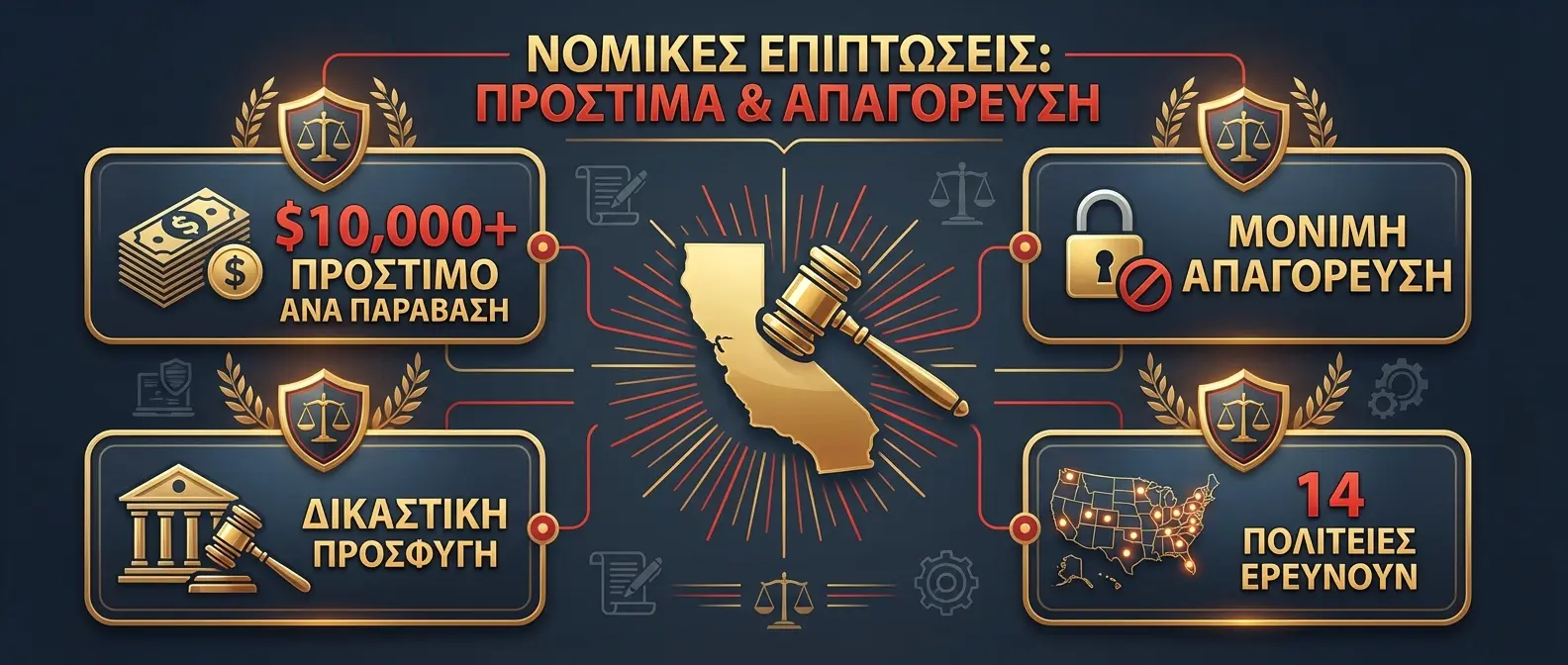

The Legal Consequences

California has some of the strictest laws in the US regarding privacy protection and image rights. Violating these laws can lead to:

⚖️ Potential Sanctions

Fines of up to $10,000 per violation, lawsuits from deepfake victims, and a potential ban on operating in the state.

Elon Musk's Position

Elon Musk, owner of both xAI and X, has not officially commented on the matter. However, his philosophy of “maximum free speech” on X has repeatedly clashed with regulators and lawmakers.

xAI, founded in 2023, says its mission is to build AI that helps "understand the true nature of the universe." The lack of adequate ethical filters in Grok, however, raises questions about the company's priorities.

🔮 What to Expect

This case could become a landmark in the regulation of AI image generation tools. If California prevails, similar actions by other states and countries are likely to follow.

For users: using AI to create fake images of real people can have legal consequences, so it's important to use these tools responsibly.